Artificial Intelligence is no longer limited to innovation labs. It now drives core systems across industries—supporting decisions, automating workflows, and shaping customer interactions. As this reliance grows, so does the need to ensure that these systems behave in a controlled and accountable manner.

Without structured oversight, AI can introduce risks that are difficult to detect and even harder to correct once deployed.

AI governance provides that structure. It clearly defines how teams build, use, and monitor AI systems so they remain aligned with regulatory requirements and organizational expectations. More importantly, it creates a foundation that allows teams to scale AI adoption safely and with confidence.

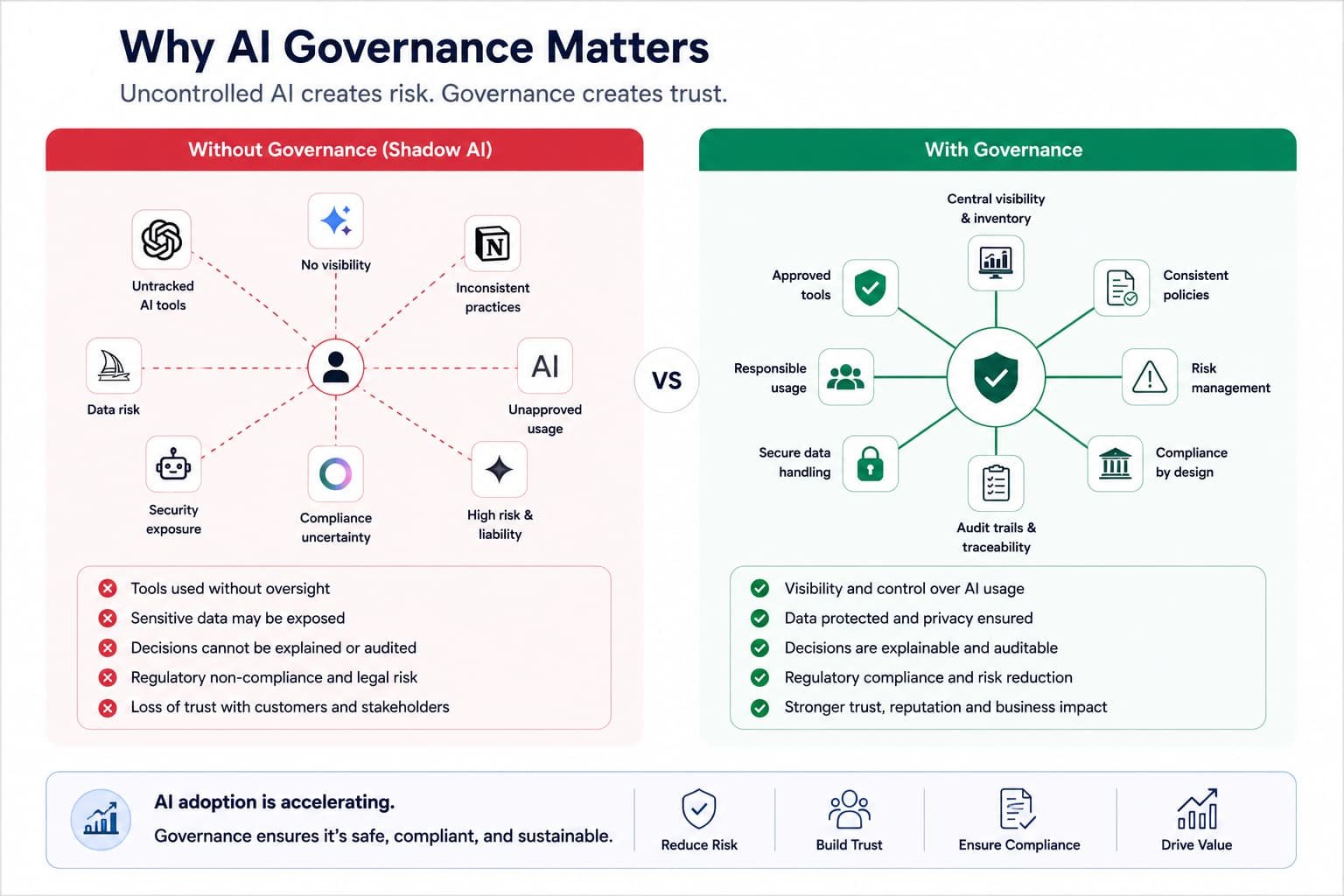

Why AI Governance Matters

Governance turns AI risk into control

The accessibility of AI tools has changed how quickly systems are adopted. Platforms such as ChatGPT enable teams to integrate AI into workflows with minimal setup. While this improves productivity, it also creates “shadow AI”—inconsistent and often ungoverned use across departments.

At the same time, regulatory expectations have become clearer. Regulations such as the EU AI Act and the GDPR require organizations to demonstrate control over their AI systems and the personal data those systems process. This includes how data is handled, how automated decisions are made, and how risks are managed.

Beyond compliance, governance also affects trust. Organizations that cannot explain or justify AI-driven decisions risk losing credibility with customers, regulators, and stakeholders.

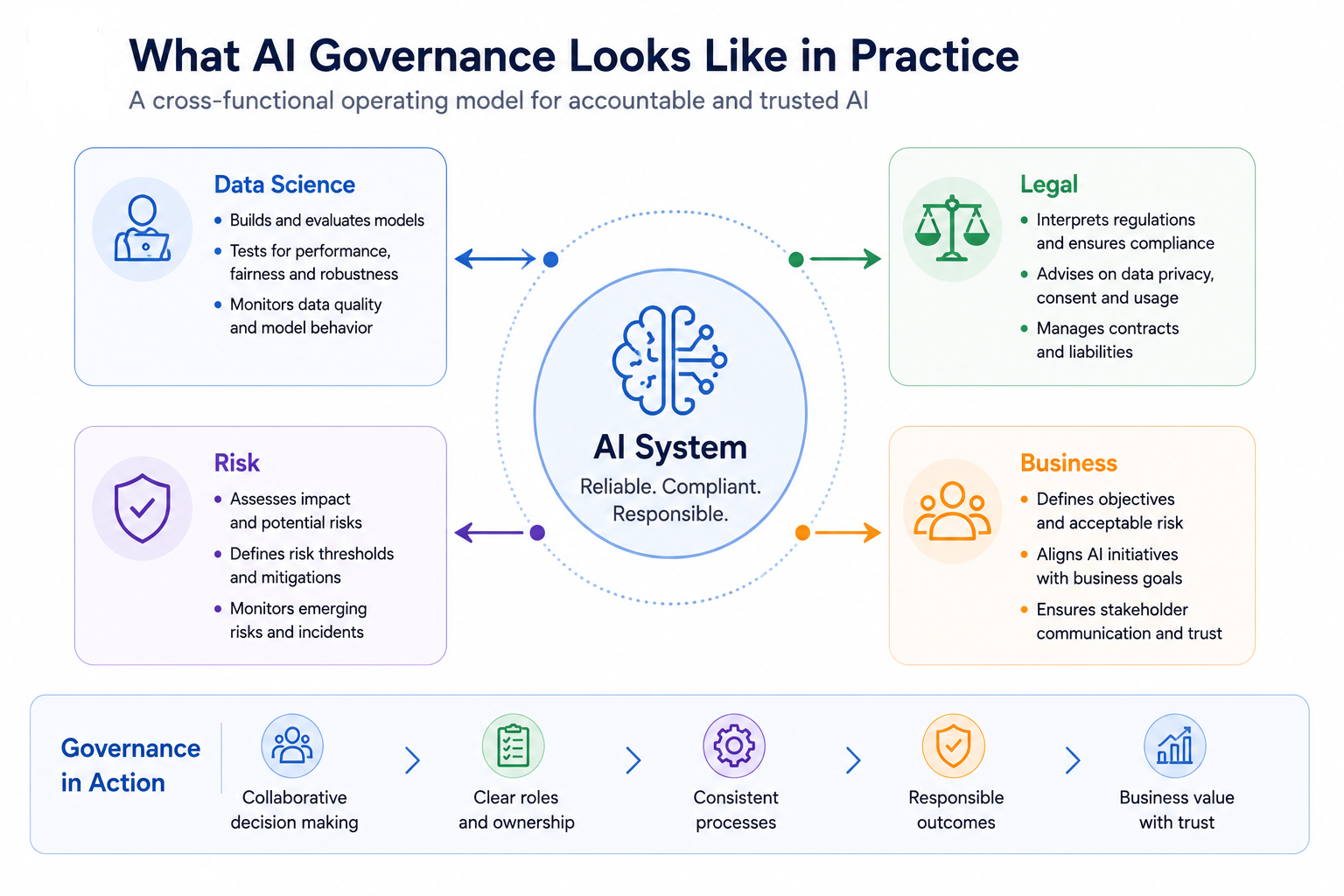

What AI Governance Looks Like in Practice

Governance requires cross-team coordination

AI governance is not a single policy or checklist. It is a coordinated system that connects technical processes with oversight mechanisms. This includes clearly assigning ownership, evaluating performance, and applying necessary controls before deployment.

In practice, governance requires ongoing involvement from different teams. Data scientists focus on model performance, legal teams interpret compliance requirements, risk managers assess impact, and business leaders define acceptable risk levels. Without this coordination, governance efforts often become fragmented and ineffective.

A well-implemented governance model ensures that decisions are not made in isolation. It creates a shared structure where technical and non-technical teams can operate with clarity and consistency.

Core Components of a Governance Framework

A complete AI governance framework typically includes the following elements:

- Defined ownership: Every AI system must have a clear owner responsible for its behavior, performance, and outcomes

- Risk classification: Teams categorize systems based on their potential impact (e.g., low, medium, high), guiding the level of oversight required

- Policy enforcement: Teams document clear rules for data usage, model validation, bias testing, and deployment

- Monitoring systems: Automated tooling continuously tracks performance, data drift, model decay, and anomalies in production environments

- Audit mechanisms: Teams maintain documentation and logs that allow decisions and processes to be reviewed after the fact

These components work together to ensure that governance is not reactive but built into the system from the start.
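As a rough illustration of how ownership and risk classification could be recorded together, the sketch below models a registry entry for one AI system. The names `AISystemRecord`, `RiskTier`, and `REQUIRED_CONTROLS`, and the mapping from tier to controls, are hypothetical and not taken from any specific framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping from risk tier to the controls a system must complete
# before deployment; a real policy would define its own list.
REQUIRED_CONTROLS = {
    RiskTier.LOW: {"model_validation"},
    RiskTier.MEDIUM: {"model_validation", "bias_testing"},
    RiskTier.HIGH: {"model_validation", "bias_testing", "human_review", "audit_logging"},
}


@dataclass
class AISystemRecord:
    """One registry entry: who owns the system and how risky it is."""
    name: str
    owner: str
    risk_tier: RiskTier
    completed_controls: set[str] = field(default_factory=set)

    def missing_controls(self) -> set[str]:
        # Controls required for this tier that have not been completed yet.
        return REQUIRED_CONTROLS[self.risk_tier] - self.completed_controls

    def ready_for_deployment(self) -> bool:
        # A system needs a named owner and no outstanding controls.
        return bool(self.owner.strip()) and not self.missing_controls()


record = AISystemRecord(
    name="credit-scoring",
    owner="risk-team",
    risk_tier=RiskTier.HIGH,
    completed_controls={"model_validation", "bias_testing"},
)
print(sorted(record.missing_controls()))
print(record.ready_for_deployment())
```

Tying the required controls to the risk tier keeps oversight proportional: a low-risk system clears the gate with less work than a high-risk one.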

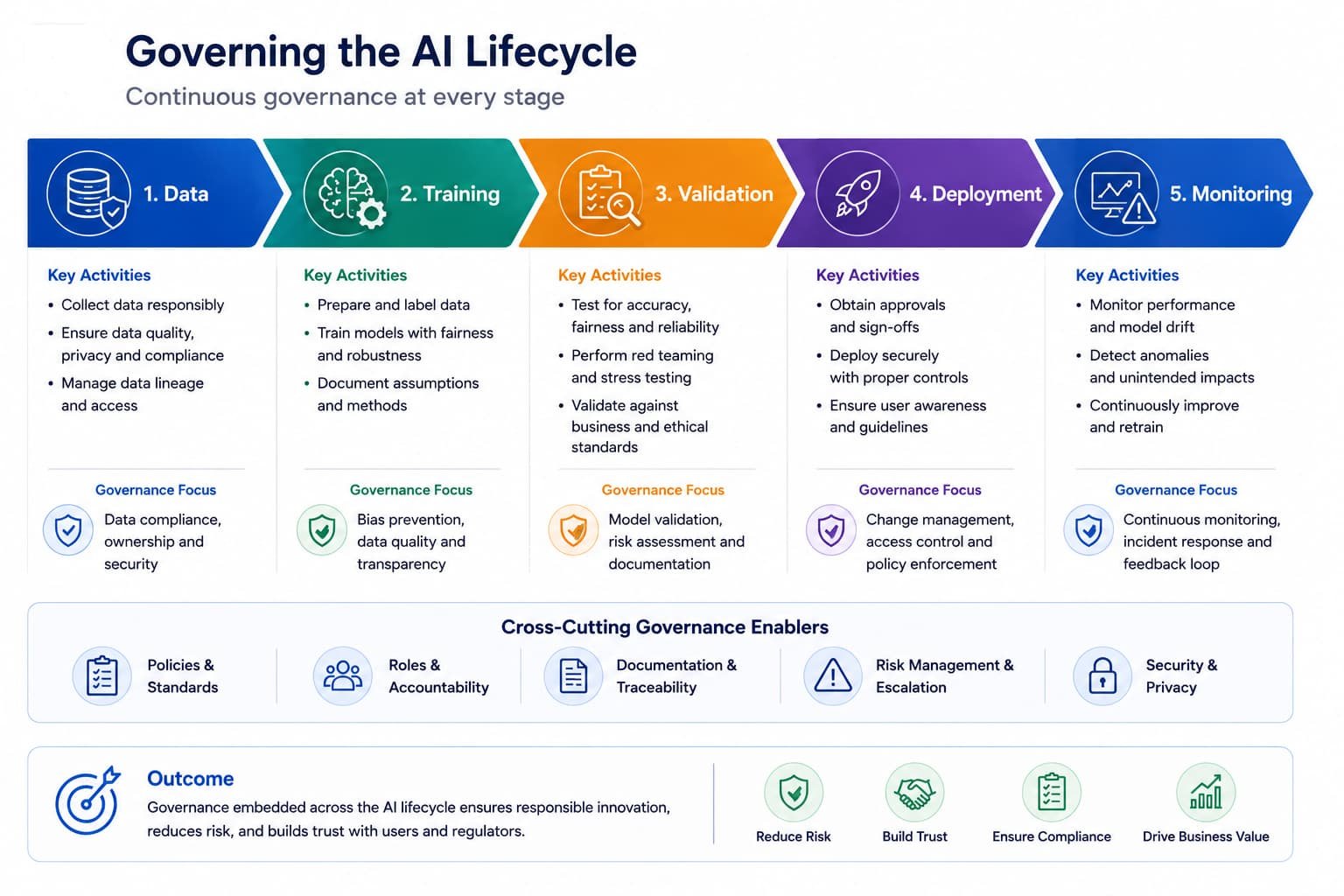

Governing the AI Lifecycle

Governance across the AI lifecycle

AI governance must extend across the entire lifecycle of a system. Each stage introduces different risks and requires specific controls.

During the early stages, the focus is on data. Ensuring that data is accurate, properly sourced, diverse, and compliant with regulations is critical. Errors at this stage often carry forward into the model and become expensive to correct later.

As development progresses, attention shifts to validation and testing. Teams evaluate models not only for accuracy but also for fairness, robustness, and reliability under different conditions. Once deployed, teams continuously monitor systems to detect changes in behavior or performance over time.
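One way to detect a change in production inputs is the Population Stability Index (PSI), which compares the distribution of a feature at training time with its distribution in production. The sketch below is a minimal pure-Python version; the bin count and the 0.2 alert threshold are common rules of thumb assumed here, not requirements from any regulation.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one numeric feature; higher means more drift."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range go into the edge bins.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


DRIFT_THRESHOLD = 0.2  # assumed alert level, to be tuned per feature

baseline = [i / 100 for i in range(100)]
production = [v + 0.5 for v in baseline]  # simulated shift in the feature
psi = population_stability_index(baseline, production)
print("drift detected" if psi > DRIFT_THRESHOLD else "stable")
```

A check like this would typically run on a schedule for each monitored feature, with alerts routed to the system's owner.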

This lifecycle approach ensures that governance is continuous. It avoids the common mistake of treating governance as a final checkpoint before deployment.

AI Compliance: From Policy to Execution

Compliance in AI requires translating legal requirements into technical implementation. It is not enough to define policies—they must be enforced through systems and processes.

Key areas of focus include:

- Data handling: Ensuring lawful collection, storage, processing, and deletion of data

- Access control: Limiting who can view or modify sensitive data and models

- Traceability: Maintaining records of how models are trained, which datasets are used, and how decisions are made

- Explainability: Providing clear reasoning behind automated decisions, especially in high-stakes scenarios

- Audit readiness: Ensuring that systems can be reviewed by internal or external auditors at any time

Organizations that embed these controls into their systems are better prepared to meet regulatory expectations without disrupting operations.
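Traceability and audit readiness can be supported by a decision log that is hard to alter unnoticed. The sketch below chains each entry to the previous one with a SHA-256 hash, so editing an earlier record invalidates the chain. The field names (`model_version`, `dataset_id`, and so on) are illustrative assumptions, and a production system would also need durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def _entry_hash(entry):
    # Hash every field except the hash itself, using a stable key order.
    payload = {k: v for k, v in entry.items() if k != "entry_hash"}
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_decision(log, model_version, dataset_id, inputs, output):
    """Append one decision record, linked to the previous entry's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "dataset_id": dataset_id,
        "inputs": inputs,
        "output": output,
        "prev_hash": log[-1]["entry_hash"] if log else "",
    }
    entry["entry_hash"] = _entry_hash(entry)
    log.append(entry)
    return entry


def verify_log(log):
    """Return True only if no entry was modified, removed, or reordered."""
    prev = ""
    for entry in log:
        if entry["prev_hash"] != prev or entry["entry_hash"] != _entry_hash(entry):
            return False
        prev = entry["entry_hash"]
    return True


audit_log = []
append_decision(audit_log, "v1.2", "loans-2024-q1", {"income": 52000}, "declined")
append_decision(audit_log, "v1.2", "loans-2024-q1", {"income": 91000}, "approved")
print(verify_log(audit_log))
```

Recording the model version and dataset identifier with each decision lets an auditor reconstruct which model produced a given outcome and what it was trained on.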

Responsible AI: Making Ethics Operational

Responsible AI is often discussed in broad terms, but it becomes meaningful only when applied in real systems. One of the most critical areas is bias management. Models must be tested across different demographic groups to ensure that outcomes are not systematically skewed or unfair.
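A simple starting point for this kind of test is to compare the rate of favorable outcomes across groups. The sketch below computes per-group selection rates and the ratio of the lowest to the highest rate. The 0.8 cutoff follows the "four-fifths" rule of thumb and is an assumption here; the appropriate metric and threshold depend on the use case and jurisdiction.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, favorable_outcome) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        if favorable:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


FAIRNESS_THRESHOLD = 0.8  # assumed cutoff based on the four-fifths heuristic

# Illustrative data: group A is approved 8 times out of 10, group B 4 out of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, ratio, "review needed" if ratio < FAIRNESS_THRESHOLD else "ok")
```

A single ratio does not prove or rule out unfairness, but a low value is a useful signal that the model needs closer review.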

Another important factor is human oversight. AI systems should not operate without accountability, especially in high-impact scenarios such as lending, hiring, or healthcare. Introducing review mechanisms ensures that decisions can be validated before they are finalized.
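A review mechanism can be as simple as a routing rule that decides which decisions a person must confirm. In the sketch below, the `Decision` type, the domain list, and the confidence threshold are all hypothetical; the point is only that the rule is explicit and testable.

```python
from dataclasses import dataclass

# Assumed set of high-impact domains, based on the examples in the text.
HIGH_IMPACT_DOMAINS = {"lending", "hiring", "healthcare"}


@dataclass
class Decision:
    domain: str
    outcome: str
    confidence: float  # model confidence between 0.0 and 1.0


def needs_human_review(decision, min_confidence=0.9):
    """Route high-impact or low-confidence decisions to a human reviewer."""
    if decision.domain in HIGH_IMPACT_DOMAINS:
        return True
    return decision.confidence < min_confidence


def finalize(decision):
    # Decisions that need review are held instead of being applied directly.
    return "pending_review" if needs_human_review(decision) else decision.outcome


print(finalize(Decision("lending", "declined", 0.97)))
print(finalize(Decision("marketing", "send_offer", 0.95)))
```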

Organizations also benefit from aligning with established guidelines from bodies such as the OECD and NIST. The OECD AI Principles and the NIST AI Risk Management Framework provide practical direction on implementing fairness, transparency, and accountability.

Scaling AI Governance

As AI adoption increases, governance must expand without slowing development. This requires a shift from manual oversight to structured and repeatable processes.

Standardization is one of the most effective approaches. When teams use predefined templates, risk assessment questionnaires, and approval workflows, they can follow governance requirements without interpreting them from scratch each time. This reduces delays and improves consistency.

Automation further strengthens this approach. By integrating governance checks into CI/CD pipelines, organizations can enforce policies automatically, for example by blocking a deployment when required validation or bias tests have not passed. This ensures that compliance and quality standards are maintained even as the number of AI systems grows rapidly.
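An automated governance check in a pipeline might look like the sketch below: a function that compares a model's metadata with a policy and returns a non-zero exit code when something is missing or below threshold. The metadata keys and the thresholds in `POLICY` are hypothetical; a real pipeline would load them from the organization's own policy definitions.

```python
# Hypothetical policy values; real ones would come from the organization's
# documented governance policy.
POLICY = {
    "required_fields": ["owner", "risk_tier", "training_dataset"],
    "min_accuracy": 0.85,
    "min_fairness_ratio": 0.80,
}


def check_model_card(card, policy=POLICY):
    """Return a list of human-readable policy violations (empty if none)."""
    violations = []
    for name in policy["required_fields"]:
        if not card.get(name):
            violations.append(f"missing required field: {name}")
    for metric, key in (("accuracy", "min_accuracy"),
                        ("fairness_ratio", "min_fairness_ratio")):
        value = card.get(metric)
        if value is None:
            violations.append(f"{metric} was not reported")
        elif value < policy[key]:
            violations.append(f"{metric} {value} is below the minimum {policy[key]}")
    return violations


def gate_exit_code(card, policy=POLICY):
    """Print violations and return 0 (pass) or 1 (fail) for the pipeline."""
    violations = check_model_card(card, policy)
    for violation in violations:
        print(f"POLICY VIOLATION: {violation}")
    return 1 if violations else 0


candidate = {
    "owner": "risk-team",
    "risk_tier": "high",
    "training_dataset": "loans-2024-q1",
    "accuracy": 0.91,
    "fairness_ratio": 0.72,
}
print(gate_exit_code(candidate))
```

A pipeline step would pass the result to `sys.exit`, so a failing check stops the deployment job before the model reaches production.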

Challenges Organizations Face

Despite clear benefits, implementing AI governance is not straightforward. One of the most common issues is unclear ownership. When multiple teams are involved, responsibility can become diluted, leading to gaps in oversight.

Another challenge is keeping pace with change. AI technologies and use cases evolve quickly, and regulations continue to develop. Organizations must regularly review and update their governance practices.

There is also a skills gap. Effective governance requires knowledge of technology, law, ethics, and risk management. Building teams with this combined expertise takes time and investment.

The Business Impact of AI Governance

AI governance directly affects how quickly and safely organizations can adopt AI. With clear processes in place, teams can innovate faster, knowing that risks are being actively managed.

It also improves reliability. Systems that are properly monitored and controlled perform more consistently, reducing the likelihood of unexpected failures or costly incidents. Over time, this leads to better business outcomes and fewer disruptions.

Most importantly, governance builds trust. When organizations can explain how their AI systems work and demonstrate responsible practices, they strengthen relationships with customers, partners, and regulators.

Conclusion

AI governance is essential for organizations that rely on AI systems. It connects compliance, operational processes, and technical controls into a structured approach that supports both growth and accountability.

As AI continues to expand across industries, governance will determine how effectively organizations can manage risk while continuing to innovate. Those that establish strong governance frameworks early will be better positioned to scale their AI initiatives with confidence.